AI Native Readiness: Beyond Bolted-on AI Solutions

Over the past year, I’ve had lots of conversations with CTOs and product leaders about AI readiness. Almost every organization can point to something. A pilot. A chatbot. An internal coding assistant. A proof of concept built on top of a foundation model.

AI readiness typically asks how we can use AI: whether we have access to models, and whether we can run experiments, learn new tools, and integrate some AI functionality into an existing product experience.

AI-native readiness asks something more fundamental. Are our systems, teams, data models, and governance frameworks designed for continuous model-driven evolution? That distinction matters.

Bolting AI onto an existing product can generate short-term gains. But if your architecture tightly couples logic to a single provider, if your data pipelines are not instrumented for feedback, if your evaluation loops are informal, and if security controls were designed for deterministic systems, you will plateau quickly.

AI-native organizations design for adaptation. They assume models will improve monthly. They assume workflows will shift. They assume evaluation and governance are ongoing responsibilities, not launch-phase checklists.

For technical leaders, this is not about chasing hype. It is about making durable architectural and organizational decisions. AI-native readiness is a better measure because it reflects whether your organization can compound value from AI over time, not just demonstrate it once.

We break it down across six dimensions:

1. Product Architecture Designed for Model Evolution

AI-native products assume models will change. Models improve. APIs evolve. Pricing shifts. Providers consolidate. Your architecture should reflect that reality. AI-native systems abstract model layers. They separate orchestration from inference. They support component swapping. They log structured interactions for retraining and evaluation.

In practice, this means:

- Clear boundaries between business logic and model interfaces

- Observability at the prompt, response, and decision layer

- Versioned prompt and evaluation frameworks

- The ability to test multiple models against the same workflow

If replacing a model requires refactoring large portions of your system, you are not operating in an AI-native posture.
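One way to picture the boundary between business logic and model interfaces is a small adapter layer. The sketch below is illustrative only: `ModelClient`, `EchoModel`, `summarize_ticket`, and `compare_models` are hypothetical names, and the stub adapter stands in for a real provider SDK call.

```python
from dataclasses import dataclass
from typing import Protocol


class ModelClient(Protocol):
    """Hypothetical interface that every model adapter implements."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for a provider adapter; a real one would call a vendor SDK."""

    name: str
    prefix: str

    def complete(self, prompt: str) -> str:
        return f"{self.prefix}{prompt.upper()}"


def summarize_ticket(model: ModelClient, ticket_text: str) -> dict:
    """Business logic that depends only on the ModelClient interface."""
    prompt = f"Summarize: {ticket_text}"
    response = model.complete(prompt)
    # Structured record that can be logged for evaluation or retraining.
    return {"model": model.name, "prompt": prompt, "response": response}


def compare_models(models: list[ModelClient], ticket_text: str) -> list[dict]:
    """Run the same workflow against several models without changing it."""
    return [summarize_ticket(m, ticket_text) for m in models]
```

Because `summarize_ticket` never imports a provider library, swapping or adding a model means writing one new adapter rather than refactoring the workflow.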

2. Data as a Strategic Asset, Not an Afterthought

AI-native readiness goes beyond asking whether data exists. It asks whether data is usable, governed, and connected to feedback loops.

You need:

- Structured and unstructured data pipelines

- Clear ownership of data domains

- Mechanisms to capture user interactions for improvement

- Instrumentation that ties model outputs to business outcomes

For example, in a clinical triage workflow, it is not enough to generate recommendations. You must track recommendation accuracy, escalation rates, and user overrides. That feedback must flow back into evaluation. AI-native organizations treat data flows as part of the product surface, not background infrastructure.
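As a rough sketch of what that tracking could look like, the snippet below aggregates the three signals from the triage example into rates. The `TriageEvent` schema and its field names are assumptions made for illustration, not a prescribed data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageEvent:
    """One model recommendation plus what happened next (hypothetical schema)."""

    recommended: str       # what the model suggested
    final: str             # what the clinician actually chose
    escalated: bool        # whether the case was escalated
    outcome_correct: bool  # whether the recommendation matched the confirmed outcome


def feedback_metrics(events: list[TriageEvent]) -> dict[str, float]:
    """Aggregate accuracy, escalation rate, and override rate as values in [0, 1]."""
    if not events:
        return {"accuracy": 0.0, "escalation_rate": 0.0, "override_rate": 0.0}
    n = len(events)
    return {
        "accuracy": sum(e.outcome_correct for e in events) / n,
        "escalation_rate": sum(e.escalated for e in events) / n,
        # An override is any case where the final decision differs from the model's.
        "override_rate": sum(e.recommended != e.final for e in events) / n,
    }
```

Metrics like these only become a feedback loop once they are reviewed on a schedule and fed into the evaluation datasets.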

3. Engineering Workflows Built Around AI

AI-native readiness also changes how software is built. Engineering teams should be using AI in development, testing, and QA in a systematic way.

This includes:

- AI-assisted code generation and refactoring

- Automated test generation and regression validation

- LLM-on-LLM review for critical logic paths

- Prompt libraries treated like versioned source code

This is not simply about velocity. It is about building teams that assume intelligent tooling is embedded in the workflow. If your engineering process looks unchanged from several years ago, you are likely under-leveraging what AI-native development enables.
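To make "prompts treated like versioned source code" concrete, here is a minimal sketch of a prompt registry. `PromptRegistry` and its methods are hypothetical, and the in-memory storage is an assumption; in practice the templates would more likely live in version control alongside the code.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRegistry:
    """In-memory stand-in for a versioned prompt store (illustrative only)."""

    versions: dict[str, list[str]] = field(default_factory=dict)

    def register(self, name: str, template: str) -> int:
        """Store a template and return its 1-based version number."""
        history = self.versions.setdefault(name, [])
        if history and history[-1] == template:
            return len(history)  # unchanged text does not create a new version
        history.append(template)
        return len(history)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return a specific version, or the latest when no version is given."""
        history = self.versions[name]
        return history[-1] if version is None else history[version - 1]

    def fingerprint(self, name: str, version: Optional[int] = None) -> str:
        """Short content hash to record alongside each logged model call."""
        text = self.get(name, version)
        return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
```

Logging the fingerprint with every model call makes it possible to tie a change in output quality back to the exact prompt text that produced it.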

4. Operational Maturity and Continuous Evaluation

AI systems are probabilistic. They drift. Edge cases accumulate. Performance changes over time. AI-native readiness requires operational discipline.

You need:

- Formal evaluation frameworks

- Benchmark datasets aligned to real use cases

- Continuous monitoring of latency, cost, and output quality

- Clear rollback and fallback strategies

In practice, this can mean shadow testing new models before promotion to production. It can mean regression suites that evaluate AI outputs against curated scenarios. It can mean cost observability tied directly to specific workflows. Without this layer, pilots rarely mature into reliable systems.
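A regression suite with a promotion gate can be sketched in a few lines. Everything here is an assumption for illustration: models are plain callables, a scenario passes if an expected substring appears in the output, and `should_promote` is a hypothetical gate. Real suites usually need richer scoring than substring matching.

```python
from typing import Callable

Model = Callable[[str], str]
Scenario = tuple[str, str]  # (input text, substring expected in the output)


def pass_rate(model: Model, scenarios: list[Scenario]) -> float:
    """Fraction of curated scenarios whose output contains the expected text."""
    if not scenarios:
        return 0.0
    passed = sum(
        expected.lower() in model(text).lower() for text, expected in scenarios
    )
    return passed / len(scenarios)


def should_promote(
    candidate: Model,
    baseline: Model,
    scenarios: list[Scenario],
    max_regression: float = 0.0,
) -> bool:
    """Allow promotion only if the candidate is no worse than the baseline,
    within an explicit tolerance."""
    candidate_rate = pass_rate(candidate, scenarios)
    baseline_rate = pass_rate(baseline, scenarios)
    return candidate_rate >= baseline_rate - max_regression
```

In a shadow-testing setup, the candidate would receive a copy of production traffic while only the baseline's output is served, and a gate like this would run before any switch.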

5. Organizational Capability and Cross-Functional Alignment

AI-native readiness is not just technical.

Product, engineering, data, compliance, and security must align on:

- Acceptable risk thresholds

- Explainability requirements

- Human-in-the-loop design

- Escalation paths for model failure

You need leaders comfortable designing probabilistic systems. You need engineers who understand distributed architectures and model behavior. You need product teams who can define success metrics for non-deterministic outputs.

Without cross-functional clarity, AI remains isolated.

6. Data Security and Model Governance by Design

AI introduces new attack surfaces.

Prompt injection. Data leakage. Model inversion. Third-party API risk.

AI-native readiness requires:

- Strict data segmentation

- Clear PII handling policies

- Vendor risk assessments specific to AI providers

- Audit trails for model decisions

- Encryption and access controls at every inference boundary

In regulated industries, this is foundational. If you cannot clearly trace how data moves through your AI workflows, you should not scale them.

Security cannot be retrofitted onto AI systems built without governance in mind.
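As one illustration of controls at an inference boundary, the sketch below redacts input before it reaches a model and writes an audit record for the call. The names `redact` and `audited_call` are hypothetical, and the email pattern is deliberately simplistic; it is an assumption for the example, not a complete PII detector.

```python
import hashlib
import json
import re
from datetime import datetime, timezone
from typing import Callable

# Illustrative pattern only; real PII handling needs far broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text: str) -> str:
    """Replace email addresses before the text leaves the trust boundary."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def audited_call(
    model: Callable[[str], str],
    model_name: str,
    user_id: str,
    text: str,
    audit_log: list[str],
) -> str:
    """Redact the input, call the model, and append a JSON audit record."""
    safe_text = redact(text)
    output = model(safe_text)
    audit_log.append(
        json.dumps(
            {
                "ts": datetime.now(timezone.utc).isoformat(),
                "user": user_id,
                "model": model_name,
                # Store a hash rather than the raw input to limit data exposure.
                "input_sha256": hashlib.sha256(safe_text.encode("utf-8")).hexdigest(),
                "redacted": safe_text != text,
            }
        )
    )
    return output
```

Routing every inference through a wrapper like this gives one place to enforce policy and one trail to inspect when tracing how data moved through a workflow.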

Advice for CTOs

AI-native readiness is not about having the latest tools. It is about whether your organization is positioned to continuously build, evaluate, and evolve intelligent systems.

As CTOs, we are responsible for durable systems. Systems that adapt without becoming fragile. Systems that compound value rather than degrade.

AI-native readiness is a structural lens. It forces us to examine architecture, data, workflows, operations, organizational alignment, and security as an integrated system.

That is a more meaningful measure than whether we have launched an AI feature. And in the current environment, it is the measure that will separate experimentation from sustained advantage.

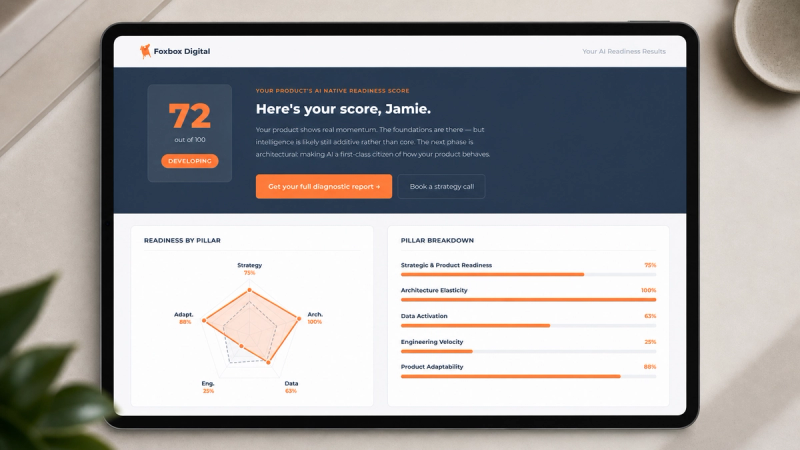

Take the self-assessment

Take the free self-assessment and begin your journey to AI-native products with clarity: 3 minutes, 1 unified score across 5 critical dimensions. You might be surprised by what you find. Click here to get started.

Elliott Torres

Elliott is the CTO of Foxbox and serves as Fractional CTO to our clients. He is responsible for setting the technical and delivery standards of our product design & development teams. With over 30 years as a solution architect and technology executive, Elliott’s known for leading highly successful AI & ML initiatives, particularly in healthcare. Elliott’s track record as an outcome-focused technical leader is invaluable to the Foxbox team.